data privacy risks

📌 Disclaimer: This article is NOT against AI. AI is an incredible learning tool that can help your child succeed. The goal here is simple: teach kids how to use AI safely, just like you taught them to look both ways before crossing the street. AI is not the enemy. Careless data sharing is.

You hear it from the kitchen. The rapid click of a keyboard. A pause. Then your teenager's voice: "I'm just doing homework."

They are not lying. They are using AI. And in 2026, that is normal.

Schools have not fully caught up. Teachers are unsure. Parents are relieved that someone is finally helping with algebra.

But here is the question no one is asking:

What data did your child just type into that free AI tool?

A recent study found that 57% of employees use personal GenAI accounts for work without telling their employers. They paste internal documents, customer names, and confidential strategies into free chatbots.

Teenagers do the exact same thing. Except instead of trade secrets, they are pasting your family's private information.

Let me show you what is at risk.

The Homework That Exposes Your Family

Your child opens a free AI tool. They type their assignment. But assignments are rarely just questions.

Real examples of what teenagers paste into AI:

1. "Write about my family's trip to Disney World last June. Include my mom's name (Lara), my dad's name (Michael), and my little brother's nickname (Buddy)."

2. "Help me solve this word problem about our monthly rent. Our rent is $2,100 and we live in Austin, Texas."

3. "Explain why my mom was late to work. She takes medication for her thyroid and overslept."

4. "Write a story about a girl my age. Use my name (Emma), my school (Lincoln Middle), and my pet's name (Luna)."

5. "My dad's birthday is coming up. He was born on March 15, 1980. Give me gift ideas."

Read those again. Slowly.

Every single one contains personally identifiable information (PII). Names. Locations. Medical conditions. Birthdates. School names. Pet names (which are often security answers).

Your child is not leaking data. They are handing it over willingly.

What Free AI Tools Actually Collect and Store

Parents assume AI tools work like a calculator. Type in numbers. Get an answer. Nothing saved.

That is dangerously wrong.

Most free AI tools collect:

Everything you type – Every prompt, every follow-up question, every correction. Conversation history is typically kept until you manually delete it, and deletion only helps if the provider actually honors it.

Account information – Email address, name, payment method (if upgraded), device type, IP address, location.

Usage patterns – How long you use the tool, time of day, which features you click.

Files you upload – If your child uploads a PDF worksheet, a photo of a handwritten essay, or a screenshot of a textbook page, that file is now on the provider's servers.

Feedback data – When your child clicks "thumbs up" or "thumbs down" on an answer, that click is recorded along with the answer itself.

One major AI provider's privacy policy explicitly states that it may use user-generated content to improve its models. That means your child's English essay could become training data for the next version of the AI.

And here is the part most parents miss: Even though your child's account is "free," the company still needs to make money. That money comes from investor funding and, eventually, paid subscriptions. In the meantime, your child's data is the product.

The Three Data Leak Scenarios Parents Never Consider

Most parents worry about hackers stealing data. That is not the main risk here. The real risks are different and worse.

Scenario 1: The Permanent Record

Your child types something embarrassing, private, or incriminating into an AI tool. They delete the chat. They close the browser.

But that conversation still exists on the company's servers. Depending on the provider's retention policy, it could stay there for months or years. A future data breach could expose it. A future third party may be able to gain access through a subpoena.

Your child’s “temporary” curiosity becomes a permanent digital footprint.

Scenario 2: The Security Question Goldmine

AI tools ask follow-up questions. "Tell me more about your pet." "What street did you grow up on?" "What was your first car?"

These are common security questions for banking, email, and social media accounts.

Your child answers innocently. They think the AI is being friendly. In reality, they are documenting the exact answers that would allow someone to reset your passwords.

Now imagine a data breach. A hacker gets your child's chat history. They now have your mother's maiden name, your first pet's name, and the street you grew up on. They can call your bank and pass verification in minutes.

Scenario 3: The Targeted Phishing Attack

An attacker does not need direct access to AI chat logs. Knowing which tools your family uses is enough.

If your child posts on social media about using "an AI tool" for homework, an attacker knows there is a high-value dataset associated with your family email address. They can then craft a phishing email that references the AI tool.

"Dear Parent, we noticed unusual activity on your child's AI account. Please click here to verify."

The email looks real because it references something your family actually uses. Your guard drops. You click. You lose your login credentials.

Why Teens Are the Perfect Data Leak Vectors

Adults know (or should know) not to paste sensitive information into unsecured tools. Teens do not have that instinct.

Teenage data-sharing patterns that create risk:

1. They use personal accounts for everything, not school-managed accounts

2. They do not read privacy policies (most adults do not either)

3. They assume free tools are safe because "everyone uses them"

4. They share context freely, not realizing context is data

5. They collaborate, meaning one child's leak becomes their friend's leak

6. They use the same email and password across multiple tools

Your teenager is not malicious. They are just efficient. And efficiency, combined with free AI tools, is a privacy disaster waiting to happen.

The Workplace Parallel: 57% Already Do This at Work

The statistic mentioned earlier bears repeating: 57% of employees use personal GenAI accounts for work tasks. Salespeople paste customer lists. HR staff paste employee reviews. Engineers paste proprietary code.

Companies are scrambling to block personal AI accounts on work devices. But employees still use their phones. Their home computers. Their personal laptops.

Your child is doing the same thing at home. The difference is that your child does not have trade secrets. They have your family's secrets.

If a company discovered an employee had pasted customer Social Security numbers into a free AI tool, that employee would be fired immediately. Maybe sued.

Your child is doing the equivalent in your living room. And no one is stopping them.

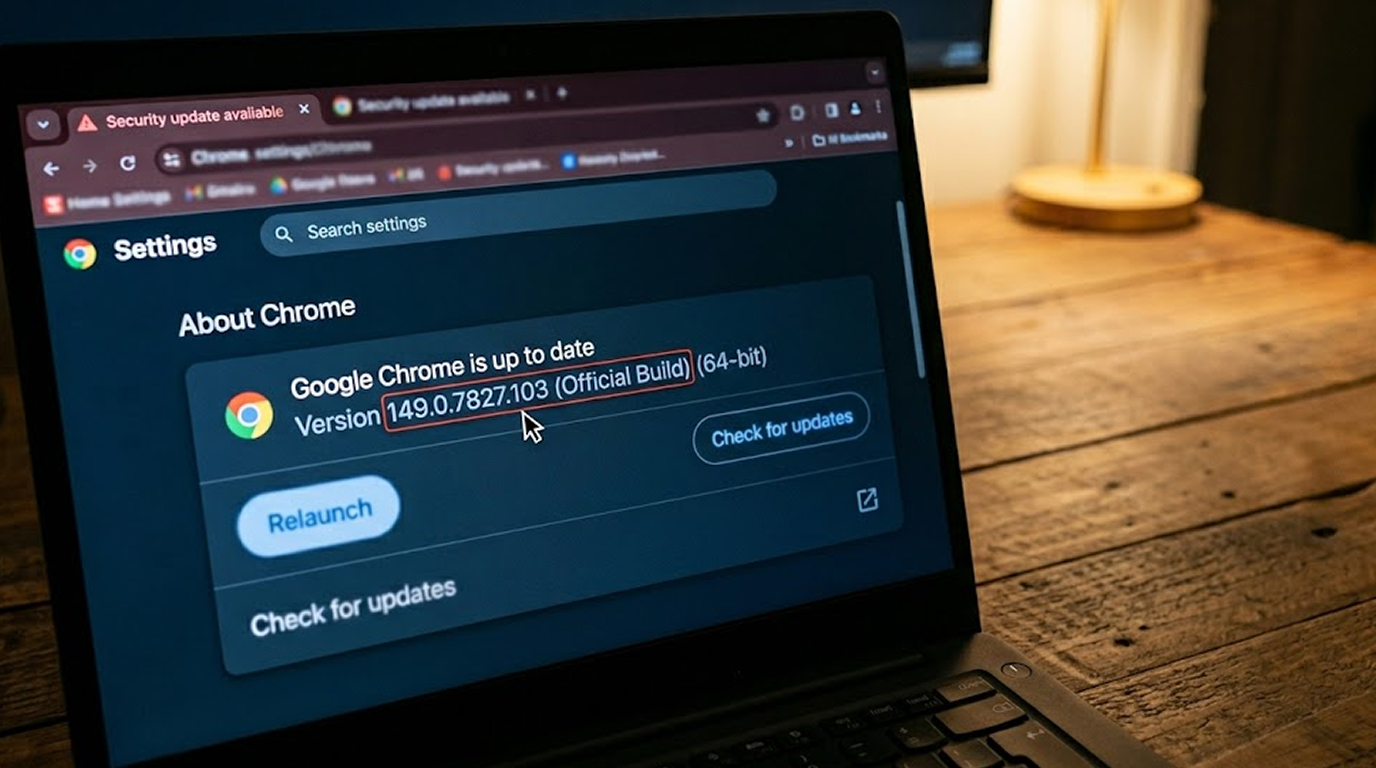

How to Check What Your Child Has Already Leaked

You cannot delete what is already out there. But you can assess the damage.

Step-by-step audit for parents:

1. Ask to see their AI chat history

Most tools keep a sidebar of all past conversations. Scroll through. Look for mentions of family names, addresses, schools, birthdates, medical conditions, financial information, and security question answers.

2. Check which accounts they use

Are they using a personal Gmail? A school email? A throwaway account? Personal accounts typically have fewer protections than enterprise or school-managed accounts.

3. Review the privacy policy of each tool they use

Search for phrases like "data retention," "model training," "third-party sharing," and "deletion requests." Write down what you find.

4. Request deletion where possible

Most AI providers allow you to delete chat history. Some allow you to request full account deletion. Few guarantee that deleted data is removed from training models.

5. Change your security questions

If your child mentioned your pet's name, your mother's maiden name, or your childhood street, change those answers immediately. Use fake answers stored only in your password manager.
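The first audit step, scanning chat history for family details, can be partly automated if you copy the conversations into a plain text file. The following is a minimal sketch, not a complete PII detector. The watchlist entries reuse the example names from this article and are placeholders for your own; the function name `audit_chat` and the two patterns are illustrative assumptions.

```python
import re

# Hypothetical watchlist: replace with your own family's names, school, pets, and city.
WATCHLIST = ["Lara", "Michael", "Buddy", "Lincoln Middle", "Luna", "Austin"]

# Simple patterns for data that is sensitive regardless of the exact words used.
PATTERNS = {
    "date": re.compile(
        r"\b(?:January|February|March|April|May|June|July|August|"
        r"September|October|November|December)\s+\d{1,2},\s+\d{4}\b"
    ),
    "dollar amount": re.compile(r"\$\d[\d,]*(?:\.\d{2})?"),
}

def audit_chat(text, watchlist=WATCHLIST):
    """Return (line_number, label, matched_text) findings in reading order."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for term in watchlist:
            # Whole-word, case-insensitive match on each watchlist term.
            if re.search(r"\b" + re.escape(term) + r"\b", line, re.IGNORECASE):
                findings.append((number, "watchlist", term))
        for label, pattern in PATTERNS.items():
            for match in pattern.finditer(line):
                findings.append((number, label, match.group()))
    return findings

sample = (
    "Help me solve this word problem about our monthly rent. "
    "Our rent is $2,100 and we live in Austin, Texas.\n"
    "My dad's birthday is coming up. He was born on March 15, 1980."
)
for finding in audit_chat(sample):
    print(finding)
```

On the two sample prompts, the sketch flags the city, the rent amount, and the birthdate. It will miss anything not on the watchlist or not matching a pattern, so treat it as a first pass before reading the chats yourself.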

Practical Rules for Safe AI Homework Use

You cannot ban AI. Your teenager will use it anyway. So give them safe ways to use it.

Rule 1: Create a family AI account

Do not use personal accounts. Create one shared email address specifically for AI tools. Do not use that email for anything else. This limits the data attached to that account.

Rule 2: Establish the "No Real Names" rule

Your child can ask the AI for help. But they cannot use real names. "My mom" instead of "Lara." "My school" instead of "Lincoln Middle." "My pet" instead of "Luna."

Rule 3: Use school-provided accounts when possible

Many schools are now purchasing enterprise AI accounts for students. These accounts typically come with data protection agreements that prevent the provider from using student data for training. Ask your school what they offer.

Rule 4: Delete chats after every session

Make it a habit. Finish homework. Delete the conversation. Do not rely on the provider to delete it automatically.

Rule 5: Never upload documents with personal information

If your child needs to upload a worksheet, first remove any visible names, addresses, or ID numbers. Use a photo editing tool to black out personal information.

Rule 6: Have the privacy talk

Your child needs to understand that typing something into an AI is like shouting it in a public library. It may be recorded. It may be repeated. It never truly disappears.
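The "No Real Names" rule can also be practiced as a quick pre-check before pasting a prompt. Below is a minimal sketch under stated assumptions: the mapping uses the example names from this article, and the function name `scrub_prompt` is hypothetical. It only swaps the exact names you list, so it does not replace careful reading.

```python
import re

# Hypothetical mapping from real details to neutral placeholders.
PLACEHOLDERS = {
    "Lara": "my mom",
    "Lincoln Middle": "my school",
    "Luna": "my pet",
}

def scrub_prompt(prompt, placeholders=PLACEHOLDERS):
    """Replace each listed real name in the prompt with its placeholder."""
    # Longest names first, so a multi-word name is replaced before any shorter name inside it.
    for real in sorted(placeholders, key=len, reverse=True):
        pattern = re.compile(r"\b" + re.escape(real) + r"\b", re.IGNORECASE)
        prompt = pattern.sub(placeholders[real], prompt)
    return prompt

print(scrub_prompt("Write a story about Luna getting lost near Lincoln Middle."))
# prints: Write a story about my pet getting lost near my school.
```

A prompt that already says "my mom Lara" would come out as "my mom my mom," which is a useful reminder that the simplest fix is to leave the name out in the first place.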

What to Do If You Discover a Leak

Do not panic. Follow these steps in order.

Immediate actions:

1. Delete the specific chat conversations that contain sensitive data

2. Delete the entire account if you are concerned

3. Change any security questions that were exposed

4. Monitor your financial accounts and credit reports for unusual activity
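For step 3, replacement security answers are safest when they are not real facts at all. One way to generate them is sketched below, assuming Python's standard `secrets` module and a short word list invented for illustration; the function name `fake_answer` is hypothetical. Store the result in your password manager, since it cannot be remembered or guessed.

```python
import secrets

# Hypothetical word list for illustration; a longer list gives stronger answers.
WORDS = [
    "copper", "lantern", "orbit", "meadow", "pixel", "harbor", "walnut", "ember",
    "glacier", "ribbon", "saddle", "tundra", "velvet", "willow", "zephyr", "anchor",
]

def fake_answer(word_count=4, words=WORDS):
    """Build a random, made-up security answer from unrelated words."""
    # secrets.choice draws from the operating system's secure random source.
    return "-".join(secrets.choice(words) for _ in range(word_count))

print(fake_answer())
```

Each run prints a different four-word answer, so generate a separate one for every security question on every account.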

If the data included financial information:

Contact your bank and ask it to watch your accounts for suspicious activity. Place a fraud alert with one of the national credit bureaus. Consider freezing your credit.

If the data included medical information:

Contact your health insurance provider. Ask if there are any unauthorized claims. Review your explanation of benefits statements.

If the data included identity documents:

File a report with the Federal Trade Commission (FTC) at IdentityTheft.gov. Follow their recovery plan.

The Bottom Line for Parents

Your child is not doing anything wrong. They are using the tools of their generation. The problem is not the child. The problem is that free AI tools have privacy models designed for adults who know better.

Your teenager does not know better. They have never been taught.

So teach them. Not with fear. With facts.

Show them this article. Walk through the scenarios. Make the rules together. And then check in regularly.

The goal is not to stop AI use. The goal is to stop data leakage while letting your child learn and grow.

Because the real risk is not that your child uses AI for homework. The real risk is that no one told them what not to type.

Do not let that be your family.

FAQ

1. Can ChatGPT or other AI tools really see what my child types?

Yes. Everything your child types into a free AI tool is sent to the provider's servers. Those conversations are stored, analyzed, and may be used to improve the AI model.

2. What specific family information is most at risk when children use AI for homework?

The highest risks are full names, home addresses, school names, birthdates, medical conditions, financial information (rent, bills, income), and answers to common security questions like pet names, mother's maiden name, and childhood street names.

3. Is it safer for my child to use a school email address with AI tools?

Usually, but only when the account is managed by the school. A school-managed account tends to come with a stronger data protection agreement than a personal account. Most free AI tools, however, do not distinguish between a school email address and a personal one. If your school has an enterprise AI license, your child's data may be protected from being used for training. Ask your school's IT department to confirm its policies.

4. How long can AI tools store my child's chat history?

It depends on the provider. Some store chat history indefinitely unless you delete it yourself. Others delete chat history after 30 days of account inactivity. Few providers remove information from their training models when they receive an individual deletion request, so you will need to read each provider's privacy policy.

5. What's the number one rule for children who use AI to help with their homework?

Don't use actual names, addresses, or other personal information. Instead, use placeholders such as "my mom," "my school," or "my pet." And if the AI does not need a piece of information to answer the question, leave it out entirely, even when no placeholder fits. Following this one rule prevents most data leakage incidents.